
Now, run Chrome (or Chromium), and go to the following URI: chrome://inspect. F12 is the key that I press the most after Ctrl+C and Ctrl+V (because I mostly do Stack Overflow-Driven Development - just kidding).

Did you know that you can use the same Dev Tools to inspect Node.js applications? Node.js and Chrome run the same engine, Chrome V8, which contains the inspector used by the Dev Tools.

For educational purposes, let's say that we have the simplest HTTP server ever, whose only purpose is to display all the requests that it has ever received:

Debugger listening on ws://127.0.0.1:9229/655aa7fe-a557-457c-9204-fb9abfe26b0f

During my debugging quest, I discovered siege, an HTTP/FTP load tester and benchmarking utility, which is pretty useful when it comes to measuring memory under heavy load.

Also, resist the urge to enable developer tools or verbose loggers if they are not necessary, otherwise you'll end up debugging these dev tools!

Accessing Node.js Memory Using V8 Inspector & Chrome Dev Tools
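The listing for the "simplest HTTP server ever" mentioned above is not reproduced here, so the following is only a minimal sketch of what such a server might look like, not the original code. The `requests` array, the `handleRequest` name, and the `DEMO_PORT` environment variable are assumptions made for illustration. The leak is deliberate: every request is stored and never released.

```javascript
const http = require('http');

// Every incoming request is recorded here and never removed.
// This unbounded array is the (intentional) memory leak.
const requests = [];

function handleRequest(req, res) {
  requests.push({
    method: req.method,
    url: req.url,
    headers: req.headers,
    date: new Date().toISOString(),
  });
  res.writeHead(200, { 'Content-Type': 'application/json' });
  // Display all the requests received so far.
  res.end(JSON.stringify(requests));
}

const server = http.createServer(handleRequest);

// DEMO_PORT is a hypothetical variable used only by this sketch,
// so that loading the file does not open a socket by accident.
if (process.env.DEMO_PORT) {
  server.listen(Number(process.env.DEMO_PORT), () => {
    console.log(`Listening on port ${process.env.DEMO_PORT}`);
  });
}
```

Assuming the file is saved as server.js, starting it with `DEMO_PORT=3000 node --inspect server.js` should print a "Debugger listening on ws://127.0.0.1:9229/..." line like the one above, after which the process appears as a remote target in chrome://inspect.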

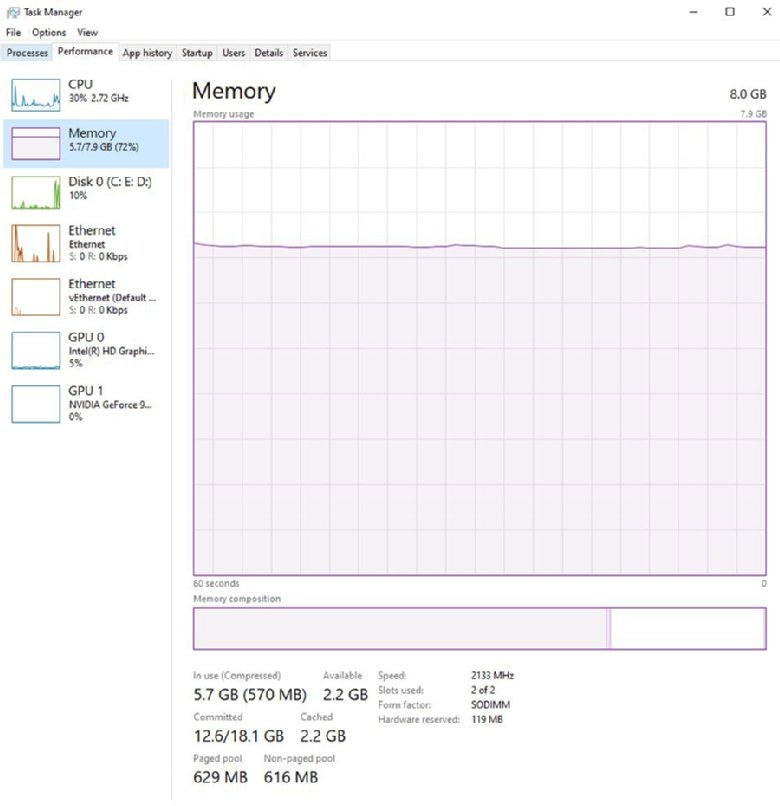

To this end, feel free to grab production logs, and send the same requests to the test environment. Ideally, it's better to use the same deployment artifact, so you can be certain that there is no difference between the production and the new test environment.

A duly configured test environment is not enough: it should run the same load as the production, too. The code should be built, optimized, and configured the exact same way as when it runs on production in order to reproduce the leak identically. It can be a Virtual Machine, or an AWS EC2 instance, but it needs to repeat the exact same conditions as in production. Now that we're comfortably seated, with a cup of tea and a few hours ahead, let's dig into the tools that'll help you find these little RAM squatters.

Before measuring anything, do yourself a favor, and take the time to set up a proper test environment. A rational way (in the short term) to postpone the problem is to restart the application before it reaches the critical bloat.

For PM2 users, the max_memory_restart option is available to automatically restart node processes when they reach a certain amount of memory. If your backlog can't accommodate some time to investigate the leak in the near future, I advise looking for a temporary solution, and dealing with the root cause later. What can't be measured can't be fixed.

Finding and fixing a memory leak in Node.js takes time - usually a day or more. Otherwise, I highly recommend taking a look at New Relic, Elastic APM, or any monitoring solution. Needless to say, I assumed that you monitor your server. Memory leaks aren't always that obvious, but when this pattern appears, it's time to look for a correlation between the memory usage and the response time.

Congratulations! You've found a memory leak. When the memory is full, and there is not enough swap left, the server can even fail to accept SSH connections.

But when the application is restarted, all the issues magically vanish!
And nobody understands what happened, so they move on to other priorities, but the problem repeats itself periodically.

Insidiously, the response time becomes higher and higher, until a point when the CPU usage reaches 100%, and the application stops responding. The first symptom of a memory leak on a production application is that memory, CPU usage, and the load average of the host machine increase over time, without any apparent reason.
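If no monitoring solution is in place yet, a rough way to confirm that memory grows over time is to log `process.memoryUsage()` at a regular interval. This is a minimal sketch and not a replacement for a real monitoring tool. The helper names (`toMegabytes`, `sampleMemory`, `startMemoryLogger`) and the 10-second default interval are assumptions made for illustration.

```javascript
// Convert a byte count to megabytes, rounded to two decimals.
function toMegabytes(bytes) {
  return Math.round((bytes / 1024 / 1024) * 100) / 100;
}

// Take one snapshot of the current process memory.
function sampleMemory() {
  const { rss, heapTotal, heapUsed, external } = process.memoryUsage();
  return {
    time: new Date().toISOString(),
    rssMb: toMegabytes(rss), // total memory held by the process
    heapTotalMb: toMegabytes(heapTotal), // heap reserved by V8
    heapUsedMb: toMegabytes(heapUsed), // heap actually in use
    externalMb: toMegabytes(external), // memory of C++ objects bound to JS
  };
}

// Log one JSON line per interval so the values can be graphed later.
function startMemoryLogger(intervalMs = 10000) {
  const timer = setInterval(() => {
    console.log(JSON.stringify(sampleMemory()));
  }, intervalMs);
  // Do not keep the process alive just for logging.
  timer.unref();
  return timer;
}
```

Calling `startMemoryLogger()` once at application startup is enough. A heapUsedMb value that keeps climbing under a steady load, instead of settling into a sawtooth after each garbage collection, is consistent with the symptoms described above.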

They become a problem when someone pays extra attention to the production performance metrics. Fixing memory leaks may not be the shiniest skill on a CV, but when things go wrong on production, it's better to be prepared!

After reading this article, you'll be able to monitor, understand, and debug the memory consumption of a Node.js application.
